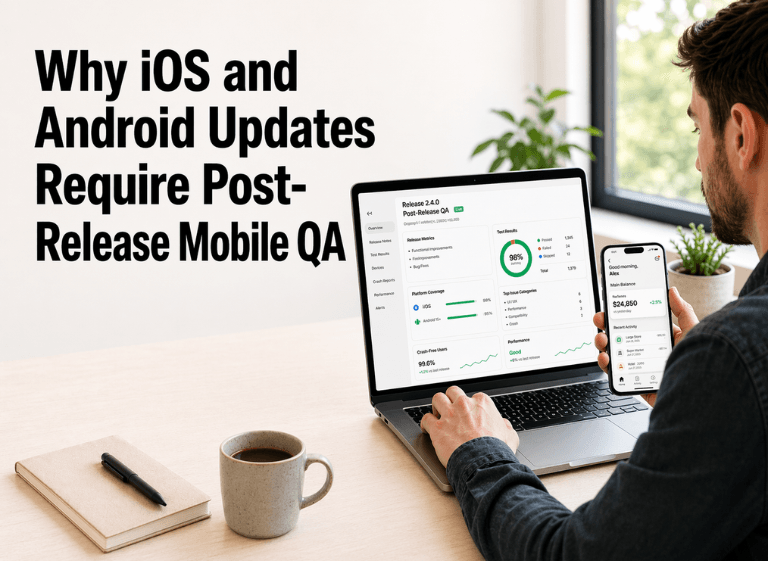

Why iOS and Android Updates Require Post-Release Mobile QA

Iliya Timohin

2026-04-24

The assumption that strong pre-release testing guarantees long-term app stability rarely holds in the real mobile ecosystem. Even when your product code stays unchanged, the environment around it keeps moving: operating systems evolve, permissions shift, runtimes change, and build tools introduce new behavior. Many regressions surface only after an update reaches real devices, so testing after an iOS or Android update cannot stop at pre-release confidence. That gap is what post-release mobile QA is for.

Why iOS and Android Updates Trigger Mobile App Regressions

iOS and Android updates change more than visual interface details. They can alter system services, permission behavior, background execution, rendering logic, and resource handling in ways that conflict with an app that previously looked stable. For product teams, that makes post-update regressions a product-quality problem, not just a testing inconvenience. This section explains where platform changes break core flows, why pre-release confidence is often weaker than teams assume, and why mobile app regression testing has to extend into post-release validation.

Where OS changes break critical app flows

Mobile apps do not run against a static environment. The iOS 26.4 release notes explicitly tell teams to update their apps and test against API changes, which is a strong reminder that platform behavior itself can shift underneath an already released product. The same applies on Android, where OS updates can affect gesture behavior, permission prompts, keyboard handling, background resume, and the way UI elements render on specific devices.

These issues are most dangerous when they affect critical flows that users treat as basic expectations: logging in, moving through navigation, completing a payment, restoring a session, or resuming the app after a system interruption. A product can look stable in controlled QA and still fail under live platform conditions once the update reaches real users.

Why release testing misses post-update risk

Pre-release testing is still necessary, but it rarely reproduces the full conditions created by a live OS rollout. Controlled environments usually cover a narrower device set, cleaner app states, and more predictable update paths than production, so part of the post-update risk stays untested until real users hit it. Even strong UI test automation cannot fully simulate how an installed app behaves after firmware, permission, runtime, or system-service changes.

Blind spots of pre-release testing

- Simulator specifics: Simulators and emulators imitate devices in software, so they do not reproduce the exact behavior of drivers, power states, and hardware interactions after a real firmware update.

- Cascading updates: An OS change often forces dependency, SDK, or system-library changes that introduce new compatibility risk.

- Migration scenarios: Regressions often appear while preserving user data, restoring sessions, or moving an installed app from one platform version to another.

- Fragmented rollout conditions: The first regressions may appear only on specific devices, OS subversions, or permission states that were not part of the original validation set.

Where Mobile App Regressions Appear After Updates

Regressions after iOS and Android updates usually appear where users feel them first: login, forms, navigation, checkout, permissions, and state changes. These flows matter because even a small failure can block a core business action. Mature teams do not try to validate everything equally after an update. They start with the places where product continuity, revenue, or trust is most exposed.

Login, forms, navigation, and checkout friction

The most visible post-update issues tend to appear in interface-heavy flows. Changes in system bar behavior, gesture handling, field focus, keyboard presentation, or tap-target sizing can make previously stable actions harder to complete. That is why strong mobile-first UX still matters after release: small screen friction becomes a product problem quickly when a user cannot log in, move through navigation, submit a form, or finish a payment.

These regressions do not always look dramatic in engineering terms. A shifted button, inconsistent keyboard behavior, or broken checkout confirmation can still create measurable business damage if the issue appears in a high-frequency flow.

Background, permissions, and device-state failures

Not all post-update regressions are visible on screen. Background resume, permission handling, lock and sleep transitions, notification behavior, and device-state changes can fail quietly until users start reporting broken sessions, missing pushes, interrupted sync, or lost continuity. Android's Core app quality guidelines (https://developer.android.com/docs/quality-guidelines/core-app-quality) reinforce the need to validate behavior on the current Android version, across a representative device set, and through real flow continuity rather than relying on narrow lab assumptions.

This is also where live feedback becomes useful. Teams often catch these failures faster when they combine monitoring with support patterns, real-user complaints, and product-usage anomalies that appear after the update reaches production.
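
Support patterns become easier to act on when each ticket is tied to a flow and an OS version. The sketch below is a minimal Python illustration under stated assumptions: tickets arrive as `(os_version, text)` pairs, and `FLOW_KEYWORDS`, `tag_ticket`, and `ticket_clusters` are hypothetical names, not part of any helpdesk product.

```python
from collections import Counter

# Hypothetical keyword map from complaint wording to the flows discussed above.
FLOW_KEYWORDS = {
    "login": ("log in", "login", "sign in", "password", "2fa"),
    "checkout": ("checkout", "payment", "card", "purchase"),
    "push": ("notification", "push"),
    "session": ("logged out", "lost my", "resume", "restart"),
}


def tag_ticket(text: str) -> list[str]:
    """Return every flow whose keywords appear in the ticket text (case-insensitive)."""
    lowered = text.lower()
    return [flow for flow, words in FLOW_KEYWORDS.items()
            if any(word in lowered for word in words)]


def ticket_clusters(tickets: list[tuple[str, str]]) -> Counter:
    """Count tickets per (os_version, flow) pair; one ticket can hit several flows."""
    counts: Counter = Counter()
    for os_version, text in tickets:
        for flow in tag_ticket(text):
            counts[(os_version, flow)] += 1
    return counts
```

Running it over tickets from the days before and after an update reaches users makes a jump, such as new login complaints concentrated on one OS version, easier to spot. Keyword matching is deliberately crude here; a team's own ticket tags would usually be more reliable.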

Update Validation Matrix

| Update type | What can break | What to validate first | What to monitor after release |
|---|---|---|---|
| iOS or Android OS update | UI rendering, gestures, keyboard behavior, permissions, background resume, device-state behavior | Sign-in, session restore, navigation, forms, checkout, push permissions, lock/sleep/resume flows | Crash spikes, ANR growth, login failures, permission issues, device-specific regressions |
| Xcode update | Build behavior, archive/export flow, asset delivery, simulator stability, diagnostics behavior | Clean build, archive/export, asset packs, app launch, CI pipeline, real-device install | Failed builds, startup issues, asset download errors, release pipeline instability |
| React Native update | JS runtime behavior, native module compatibility, rendering, navigation, third-party package behavior | App launch, critical user flows, native integrations, JS-heavy screens, package compatibility | Crash rate, memory issues, rendering regressions, device-version-specific issues |
| Device-specific update | Driver conflicts, thermal throttling, layout shifts, orientation bugs, state restore issues | Login, forms, gestures, orientation changes, background/foreground transitions, state restore | Device-model-specific crashes, ANRs, UI complaints, support tickets from affected devices |
| SDK or dependency update | Auth, analytics, payments, push delivery, deep links, background tasks | Login, payments, tracking events, push open flow, deep links, background sync | Third-party error spikes, conversion drops, broken events, silent failures |
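
To turn the matrix into a per-release checklist, it can be encoded as data. The following minimal Python sketch transcribes the "What to validate first" column into a hypothetical `VALIDATE_FIRST` mapping and merges the flows for every update type in a release cycle, dropping duplicates; the short type keys and the `flows_to_validate` helper are illustrative names, not an existing tool.

```python
# Hypothetical encoding of the "What to validate first" column of the matrix.
VALIDATE_FIRST: dict[str, list[str]] = {
    "os": ["sign-in", "session restore", "navigation", "forms", "checkout",
           "push permissions", "lock/sleep/resume"],
    "xcode": ["clean build", "archive/export", "asset packs", "app launch",
              "CI pipeline", "real-device install"],
    "react_native": ["app launch", "critical user flows", "native integrations",
                     "JS-heavy screens", "package compatibility"],
    "device": ["login", "forms", "gestures", "orientation changes",
               "background/foreground transitions", "state restore"],
    "sdk": ["login", "payments", "tracking events", "push open flow",
            "deep links", "background sync"],
}


def flows_to_validate(update_types: list[str]) -> list[str]:
    """Merge validate-first flows for all update types in a cycle.

    Keeps first-seen order and drops duplicates; an unknown update type
    raises KeyError on purpose so typos do not silently shrink the plan.
    """
    seen: set[str] = set()
    plan: list[str] = []
    for update_type in update_types:
        for flow in VALIDATE_FIRST[update_type]:
            if flow not in seen:
                seen.add(flow)
                plan.append(flow)
    return plan
```

For example, a cycle that combines an OS update with a device-specific update yields twelve distinct flows, with "forms" listed once even though both rows name it.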

Priority flows after updates

- Authentication, login recovery, and multi-factor authentication: Verify sign-in, re-authorization, password reset, multi-factor authentication steps, and session restore after the update.

- Forms, keyboards, and validation states: Recheck field focus, keyboard behavior, autofill, input masking, validation errors, and submission logic.

- Navigation and deep links: Confirm gesture behavior, back navigation, tab transitions, deep-link entry points, and return paths into the app.

- Checkout, payments, and confirmation flows: Revalidate payment confirmation, checkout confirmation, purchase completion, and failure recovery.

- Permissions, push prompts, and app-open actions: Review permission requests, push-delivery prompts, notification taps, and app-open behavior after policy changes.

- Lock, sleep, resume, and background continuity: Test sleep, lock, resume, background sync, interrupted sessions, and state persistence on real devices.
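
The priority list reads as an order as much as a checklist. One common way to make that order explicit, assumed here rather than taken from a specific methodology, is a multiplicative risk score: how often users hit a flow, how costly a failure would be, and how directly the update touches it. The 1 to 5 scales, the `Flow` type, and the sample flows below are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    """Hypothetical record for one user flow; all scores use an assumed 1-5 scale."""
    name: str
    frequency: int  # how often users hit this flow
    impact: int     # business cost if it breaks (revenue, trust, continuity)
    exposure: int   # how directly this particular update touches the flow


def risk_score(flow: Flow) -> int:
    # Multiplicative: a flow must rank high on all three factors to top the list.
    return flow.frequency * flow.impact * flow.exposure


def prioritize(flows: list[Flow]) -> list[str]:
    # Highest risk first; sorted() is stable, so ties keep their input order.
    return [f.name for f in sorted(flows, key=risk_score, reverse=True)]


flows = [
    Flow("settings screen", frequency=2, impact=1, exposure=3),
    Flow("checkout", frequency=3, impact=5, exposure=4),
    Flow("login", frequency=5, impact=5, exposure=3),
    Flow("push prompt", frequency=2, impact=3, exposure=5),
]
print(prioritize(flows))  # ['login', 'checkout', 'push prompt', 'settings screen']
```

A multiplicative score keeps a rarely used flow from outranking login or checkout just because the update touched it heavily.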

Why Xcode and React Native Updates Change QA Risk

Mobile regressions are not triggered only by the operating system. Toolchain and framework updates can change how the app is built, packaged, and executed, even when product functionality looks unchanged at a feature level. That makes Xcode and React Native changes part of QA risk, not just engineering housekeeping. This section shows why Xcode update testing and React Native update testing deserve the same level of validation as platform updates.

Toolchain changes that affect builds and assets

Toolchain changes can alter build outputs, archive behavior, startup conditions, and asset delivery in ways that are hard to predict from release notes alone. The Xcode 26 release notes are a good example: they show that build and diagnostic behavior itself can shift, which means Xcode update testing should cover clean builds, final packages, app launch paths, and asset-related behavior after the update.

This risk is easy to underestimate because it does not always look like a product change. But if the app starts differently, assets fail to load, or build behavior changes for certain targets or devices, users still experience the result as a product problem.
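
A lightweight way to make clean-build and packaging checks repeatable after an Xcode update is to script the same `xcodebuild` steps on every toolchain change. The Python sketch below only assembles the argument lists, so it can be dry-run anywhere; it assumes a hypothetical scheme `MyApp`, an `ExportOptions.plist`, and a single project that `xcodebuild` can find in the working directory. Running it with `dry_run=False` requires a macOS machine with Xcode installed.

```python
import subprocess
from pathlib import Path


def xcode_update_checks(scheme: str, build_dir: Path,
                        export_options: Path) -> list[list[str]]:
    """Assemble post-Xcode-update build checks as xcodebuild argument lists.

    Hypothetical helper. Assumes a single .xcodeproj in the working directory;
    add "-workspace" or "-project" if that is not the case.
    """
    archive = str(build_dir / f"{scheme}.xcarchive")
    common = ["-scheme", scheme, "-configuration", "Release",
              "-destination", "generic/platform=iOS"]
    return [
        ["xcodebuild", "-version"],                                   # record the toolchain version
        ["xcodebuild", *common, "clean", "build"],                    # clean build after the update
        ["xcodebuild", *common, "-archivePath", archive, "archive"],  # final package
        ["xcodebuild", "-exportArchive", "-archivePath", archive,
         "-exportPath", str(build_dir / "export"),
         "-exportOptionsPlist", str(export_options)],                 # export as it would ship
    ]


def run_checks(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # raises on the first failing step
```

These steps cover build and packaging only; app launch, asset delivery, and real-device install still need a run on physical devices.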

Framework changes that affect runtime behavior

Framework updates can introduce just as much instability as OS changes, especially in cross-platform products. The React Native 0.84 release is not just a version bump: it makes Hermes V1 the default, ships precompiled iOS binaries by default, and continues the removal of legacy architecture code. Those changes affect runtime behavior, build defaults, and compatibility assumptions that may not fail visibly until the updated framework meets a live platform environment.

That is why teams working on React Native projects need more than upgrade confidence. They need focused validation of launch behavior, rendering, native integrations, and the flows most exposed to runtime or dependency shifts.

What official signals make post-update QA necessary

The strongest case for post-release QA does not come from generic testing advice. It comes from platform and maintainer sources that show how updates change behavior across APIs, tooling, runtimes, and rollout quality signals. Taken together, these signals make it clear that post-update validation is not optional process overhead. It is a direct response to documented platform change.

| Source | Concrete signal | Why it matters for QA |
|---|---|---|
| iOS 26.4 release notes | Apple explicitly tells teams to update apps and test against API changes | Confirms that post-update validation is expected after platform changes, not treated as an edge case |
| Xcode 26 release notes | Apple documented Background Assets and simulator-related changes in the release cycle | Shows that toolchain updates can affect packaging, diagnostics, and test behavior, not only app code |
| React Native 0.84 release | Hermes V1 became the default and precompiled iOS binaries now ship by default | Framework updates can change runtime and build behavior even when app features appear unchanged |
| Android vitals | User-perceived crash rate and user-perceived ANR rate are treated as core rollout signals | Post-release monitoring should focus on user-facing stability, not only raw issue counts |

What Post-Release Signals Reveal Mobile App Issues

Production data is the part of QA that shows whether an update is safe under real rollout conditions. Post-release validation becomes useful only when teams connect technical signals with platform versions, device clusters, and user-facing business friction. This is where mobile app crash monitoring and rollout validation stop being passive reporting and start acting as part of quality control.

Crash and ANR patterns after platform updates

Crash spikes and ANR growth after a platform or framework update are not random technical noise. They often point to conflicts in resource handling, execution paths, or runtime behavior that did not surface before release. Android vitals is especially useful here because it highlights user-perceived crash rate and user-perceived ANR rate as core signals that matter after rollout, not just general diagnostic metrics.
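
Android vitals counts users, not events: the user-perceived crash rate is the share of daily active users who hit at least one crash while actively using the app, and the user-perceived ANR rate is defined the same way. A minimal Python sketch of that calculation follows. The 1.09% and 0.47% overall bad-behavior thresholds are the values Google documents at the time of writing and should be rechecked; Play also evaluates them over a rolling multi-day window, which this single-day sketch ignores. `DailyUser` and the helper names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Overall bad-behavior thresholds for Android vitals core metrics as documented
# at the time of writing (assumption: verify against current Play Console docs).
CRASH_BAD_BEHAVIOR = 0.0109  # 1.09% of daily active users
ANR_BAD_BEHAVIOR = 0.0047    # 0.47% of daily active users


@dataclass
class DailyUser:
    """Hypothetical per-user daily aggregate from the team's own telemetry."""
    user_id: str
    perceived_crashes: int  # crashes while the user was actively using the app
    perceived_anrs: int     # ANRs the user actually saw


def share_affected(users: list[DailyUser],
                   count_of: Callable[[DailyUser], int]) -> float:
    """Fraction of daily active users with at least one event of a given kind."""
    if not users:
        return 0.0
    return sum(1 for u in users if count_of(u) > 0) / len(users)


def vitals_snapshot(users: list[DailyUser]) -> dict:
    crash_rate = share_affected(users, lambda u: u.perceived_crashes)
    anr_rate = share_affected(users, lambda u: u.perceived_anrs)
    return {
        "crash_rate": crash_rate,
        "anr_rate": anr_rate,
        "crash_over_threshold": crash_rate > CRASH_BAD_BEHAVIOR,
        "anr_over_threshold": anr_rate > ANR_BAD_BEHAVIOR,
    }
```

Counting affected users rather than raw events means one user who crashes ten times moves the rate exactly as much as one user who crashes once, which is why these metrics track perceived stability better than aggregate crash counts.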

Teams need these signals mapped to platform context. A regression cluster tied to one OS version or one device family is more useful than a raw aggregate issue count, especially when production regressions need prioritization.
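
Mapping signals to platform context can start as a simple group-by. The sketch below, in Python, assumes session records of the form `(os_version, device_family, crashed)` and flags any cluster whose crash rate is at least twice the overall rate, ignoring clusters with fewer than 200 sessions; both cut-offs and the `flag_clusters` name are assumptions for illustration.

```python
from collections import defaultdict


def flag_clusters(sessions: list[tuple[str, str, bool]],
                  ratio: float = 2.0,
                  min_sessions: int = 200) -> list[tuple[tuple[str, str], float, int]]:
    """Hypothetical helper: find (os_version, device_family) clusters with outsized crash rates.

    Returns (cluster, crash_rate, session_count) tuples, worst first.
    """
    totals: dict = defaultdict(int)
    crashes: dict = defaultdict(int)
    for os_version, device_family, crashed in sessions:
        key = (os_version, device_family)
        totals[key] += 1
        crashes[key] += int(crashed)

    all_sessions = sum(totals.values())
    if all_sessions == 0:
        return []
    overall = sum(crashes.values()) / all_sessions

    flagged = []
    for key, n in totals.items():
        rate = crashes[key] / n
        # rate > 0 guards the case where the overall rate is zero.
        if n >= min_sessions and rate > 0 and rate >= ratio * overall:
            flagged.append((key, round(rate, 4), n))
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

The minimum-session floor matters: without it, a handful of crashes on a rare device would dominate the list.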

Rollout spikes, device clusters, and support signals

The most useful post-release signals usually appear in combination rather than in isolation. A sudden spike in crashes, repeated issues on one device cluster, growing cold-start delays, and support complaints tied to login, navigation, or checkout all point to the same reality: the update has introduced live regression risk.

Post-release monitoring signals

- Crash rate and ANR growth: Sudden increases tied to a specific OS version, rollout wave, or device cluster.

- Device-model clusters: Repeated failures concentrated on one device family, firmware branch, or hardware configuration.

- Rollout spikes: A sharp change in errors or degraded behavior immediately after a staged rollout expands.

- Permission-related failures: New issues tied to prompts, denied access states, notification delivery, camera, location, or system settings.

- Login and checkout drop-offs: Visible degradation in authentication, session restore, purchase completion, or payment confirmation.

- Support-ticket patterns: Repeated user complaints that map to the same update, device type, or high-value flow.
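
These signals can feed an explicit pause rule for a staged rollout. The following Python sketch compares the cohort on the new release with the previous release; the 1.5x stability tolerance, the 0.02 absolute tolerance for login and checkout funnels, and all names are hypothetical. It applies fixed thresholds only, so a real gate would also need a minimum sample size or a significance check to separate a regression from noise.

```python
from dataclasses import dataclass


@dataclass
class CohortMetrics:
    """Hypothetical per-release metrics; every field is a fraction in [0, 1]."""
    crash_rate: float
    anr_rate: float
    login_success: float        # successful sign-ins / attempts
    checkout_completion: float  # completed purchases / started checkouts


def rollout_decision(baseline: CohortMetrics, current: CohortMetrics,
                     max_rate_increase: float = 1.5,
                     max_funnel_drop: float = 0.02) -> tuple[str, list[str]]:
    """Return ("pause", reasons) if any signal regresses, else ("continue", [])."""
    reasons: list[str] = []
    # Stability rates may grow by at most max_rate_increase times the baseline.
    if current.crash_rate > baseline.crash_rate * max_rate_increase:
        reasons.append("crash rate spike")
    if current.anr_rate > baseline.anr_rate * max_rate_increase:
        reasons.append("ANR spike")
    # Business funnels may drop by at most max_funnel_drop (absolute).
    if baseline.login_success - current.login_success > max_funnel_drop:
        reasons.append("login drop-off")
    if baseline.checkout_completion - current.checkout_completion > max_funnel_drop:
        reasons.append("checkout drop-off")
    return ("pause" if reasons else "continue", reasons)
```

Returning the reasons alongside the decision keeps the pause explainable: the team sees which flow or stability signal tripped the gate, not just that it did.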

- Third-party integration instability: New failures in payments, auth, analytics, notifications, or deep links after a dependency or SDK shift.

Why Mature Teams Treat Update Validation as Ongoing QA

Mature teams do not treat post-release QA as a one-time safety step after a platform change. They treat it as part of continuous product-quality control because mobile stability depends on changing OS behavior, device diversity, and the speed of real-world feedback. This is what separates reactive debugging from a repeatable validation discipline.

Post-release QA as product quality control

For mature products, post-release validation is not a cleanup phase after launch day. It helps teams connect platform changes with product health, prioritize quality issues early, and decide whether a rollout is stable enough to continue. In practice, it turns update validation into a product decision layer rather than a narrow QA follow-up. Teams that build this discipline well usually pair technical monitoring with post-launch signals that show how real users experience the update.

Real-device checks beyond pre-release confidence

Real-device validation matters because simulators, emulators, and narrow device sets do not reproduce all the conditions that shape live app behavior. The Core app quality guidelines make this point indirectly through their emphasis on the latest Android version, a representative device set, and broader validation conditions. Real-device checks are what convert pre-release confidence into evidence that the product still works where it actually matters.
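
A representative device set can be chosen from the team's own active-user data. The minimal Python sketch below assumes per-model active-user counts are available and picks models in descending order of usage until a target share of users is covered; taking the largest models first gives the fewest models for that share. The 80% default, the placeholder model names, and `representative_devices` are illustrative; keys could equally be model and OS-version pairs written as strings.

```python
def representative_devices(active_users_by_model: dict[str, int],
                           target_share: float = 0.8) -> list[str]:
    """Hypothetical helper: fewest models whose users reach target_share of the total."""
    total = sum(active_users_by_model.values())
    if total == 0:
        return []
    chosen: list[str] = []
    covered = 0
    # Largest user bases first, so each added model covers as much as possible.
    for model, users in sorted(active_users_by_model.items(),
                               key=lambda kv: kv[1], reverse=True):
        if covered / total >= target_share:
            break
        chosen.append(model)
        covered += users
    return chosen


# Placeholder counts for illustration only.
counts = {"model-a": 500, "model-b": 300, "model-c": 120, "model-d": 80}
print(representative_devices(counts))  # ['model-a', 'model-b']
```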

FAQ

Do teams need to retest after every iOS or Android update?

Yes, but the scope should match the size of the change. Small patches may need only targeted checks, while major OS, framework, or toolchain updates require focused revalidation of critical flows.
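
Matching scope to the size of the change can be made mechanical for dotted version numbers. The Python sketch below classifies a version jump as major, minor, or patch and maps it to a scope; the scope wording and the `change_size` and `retest_scope` names are hypothetical. It treats `0.x` packages such as React Native specially, because their minor bumps (for example 0.83 to 0.84) can carry breaking changes. It assumes purely numeric components; pre-release suffixes would need extra parsing.

```python
# Hypothetical mapping from change size to retest scope.
SCOPE = {
    "major": "focused revalidation of critical flows",
    "minor": "targeted checks on flows named in the release notes",
    "patch": "targeted smoke checks",
    "none": "no retest",
}


def change_size(old_version: str, new_version: str) -> str:
    """Classify a numeric dotted version jump, e.g. "26.3" -> "26.4"."""
    old = [int(part) for part in old_version.split(".")]
    new = [int(part) for part in new_version.split(".")]
    width = max(len(old), len(new), 3)
    old += [0] * (width - len(old))  # "26.4" compares like "26.4.0"
    new += [0] * (width - len(new))

    # Index of the first differing component, or None if the versions match.
    level = next((i for i, (a, b) in enumerate(zip(old, new)) if a != b), None)
    if level is None:
        return "none"
    if old[0] == 0 and new[0] == 0:
        # 0.x packages (React Native, for example) ship breaking changes in minor
        # bumps, so 0.83 -> 0.84 counts as major and later differences as patches.
        return "major" if level == 1 else "patch"
    return ("major", "minor", "patch")[min(level, 2)]


def retest_scope(old_version: str, new_version: str) -> str:
    return SCOPE[change_size(old_version, new_version)]
```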

Is simulator or emulator testing enough after mobile platform updates?

No. Simulators and emulators help early, but they do not reproduce full real-device behavior, permissions, background states, or hardware-specific risk. Real-device validation is still necessary after platform updates.

What metrics should teams monitor after a mobile app update?

Teams should track crash rate, ANR rate, cold start time, and device-cluster anomalies as a baseline. If login, checkout, notifications, or third-party integrations are business-critical, they should also watch failures in those flows and support-ticket patterns.

When should a rollout be paused after a mobile app update?

A rollout should be paused when post-release signals show a clear regression rather than random noise. Typical signs include a crash or ANR spike, repeated failures on a specific device family or OS version, or visible degradation in critical flows.

Conclusion

Mobile app stability is not something a team proves once before release and then assumes forever. It has to be revalidated as operating systems, frameworks, and toolchains change around the product. For teams that need a stronger approach to this part of delivery, Pinta WebWare can support a more structured validation model focused on mobile quality, release stability, and post-update risk control.