How Third-Party Scripts Hurt Core Web Vitals on CMS Sites

Iliya Timohin

2026-03-20

Many Core Web Vitals problems on CMS sites do not begin with a poorly built website. They begin when third-party scripts, plugins, widgets, and apps gradually add weight, delay rendering, disrupt interaction, and introduce hidden regressions that are easy to miss at first. On eCommerce and content-heavy CMS websites, these issues often become visible after installs, plugin updates, release changes, or tracking adjustments, weakening page experience, SEO visibility, and conversion stability before the site appears obviously broken.

Third-Party Scripts as a Core Web Vitals Risk

Third-party functionality is not risky simply because it exists. It becomes risky when it enters the page’s rendering and interaction path. As Google explains in its guidance on Core Web Vitals and Google Search, performance stability shapes both page experience and search visibility.

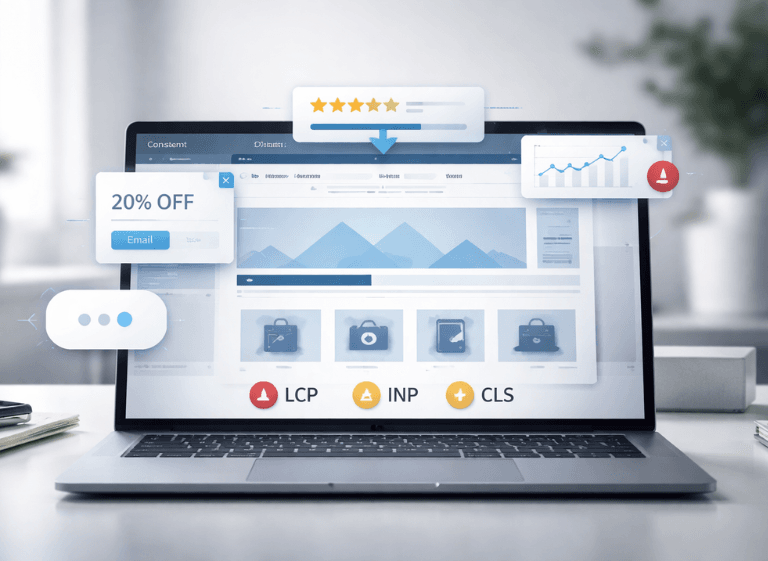

How Plugins Affect LCP, INP, and CLS

Third-party code can affect all three core metrics in different ways. It can delay LCP when important content waits behind external resources, weaken INP when extra JavaScript adds work before interactions are processed, and trigger CLS when late-loading elements change the layout after the page already appears usable.
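As a rough illustration, each of the three metrics can be rated against the thresholds Google publishes for a good and a poor experience. The sketch below is a minimal example in TypeScript; the `rateMetric` helper and its type names are assumptions made for illustration and do not come from any library.

```typescript
type Metric = "LCP" | "INP" | "CLS";
type Rating = "good" | "needs-improvement" | "poor";

// Published Core Web Vitals thresholds as [good upper bound, poor lower bound].
// LCP and INP are in milliseconds; CLS is a unitless score.
const THRESHOLDS: Record<Metric, [number, number]> = {
  LCP: [2500, 4000],
  INP: [200, 500],
  CLS: [0.1, 0.25],
};

// Hypothetical helper: maps a measured value to its rating bucket.
function rateMetric(metric: Metric, value: number): Rating {
  const [good, poor] = THRESHOLDS[metric];
  if (value <= good) return "good";
  if (value <= poor) return "needs-improvement";
  return "poor";
}

console.log(rateMetric("LCP", 2400)); // hero rendered within the good range
console.log(rateMetric("INP", 350));  // extra JavaScript slowed interactions
console.log(rateMetric("CLS", 0.3));  // late-loading element shifted the layout
```

Rating each template against the same buckets makes it easier to see which metric a newly added plugin actually moved.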

Why Extra Features Increase Performance Cost

The main problem is cumulative risk. One added feature may seem minor, but each new dependency increases the chance that future installs, updates, and tracking changes will make performance less stable. Over time, third-party functionality stops being just “extra” and starts shaping how reliably the page loads and responds.

Third-Party Elements That Hurt Core Web Vitals

The risk becomes easier to understand when viewed through the elements businesses add most often. On CMS and eCommerce sites, the main pressure usually comes from engagement widgets, tracking layers, and visual storefront features.

Review Widgets, Popups, and Chat Tools

Review widgets, consent layers, popups, and chat tools often arrive as ready-made business features, but they also add their own JavaScript, styling logic, and rendering behavior. On product and landing pages, they can delay interaction readiness, shift visible content, and make the page feel less stable during high-intent sessions.
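To make the layout-shift risk more concrete, the sketch below shows how a single late-loading widget can dominate a page's CLS. It follows the session-window approach used for CLS (shifts less than one second apart are grouped, each window is capped at five seconds, and the largest window is the score). The `ShiftEntry` shape, the `computeCls` name, and the sample values are assumptions for illustration only.

```typescript
// Simplified stand-in for a browser layout-shift entry (assumed shape).
interface ShiftEntry {
  startTime: number;       // milliseconds since navigation start
  value: number;           // layout shift score of this single shift
  hadRecentInput: boolean; // shifts right after user input do not count
}

// Hypothetical helper: returns the largest session-window sum of shift scores.
function computeCls(entries: ShiftEntry[]): number {
  const shifts = entries
    .filter((e) => !e.hadRecentInput)
    .sort((a, b) => a.startTime - b.startTime);

  let worstWindow = 0;
  let windowSum = 0;
  let windowStart = 0;
  let previousShift = 0;
  let windowOpen = false;

  for (const shift of shifts) {
    const continuesWindow =
      windowOpen &&
      shift.startTime - previousShift < 1000 && // gap under 1 second
      shift.startTime - windowStart < 5000;     // window under 5 seconds

    if (continuesWindow) {
      windowSum += shift.value;
    } else {
      // Start a new session window with this shift.
      windowSum = shift.value;
      windowStart = shift.startTime;
      windowOpen = true;
    }
    previousShift = shift.startTime;
    worstWindow = Math.max(worstWindow, windowSum);
  }
  return worstWindow;
}

// Invented sample: two small early shifts, then a review widget injected at 3 s.
const sampleShifts: ShiftEntry[] = [
  { startTime: 0, value: 0.02, hadRecentInput: false },
  { startTime: 500, value: 0.03, hadRecentInput: false },
  { startTime: 3000, value: 0.15, hadRecentInput: false },
  { startTime: 3200, value: 0.05, hadRecentInput: true },
];
console.log(computeCls(sampleShifts));
```

In this invented sample the early shifts are small, and the widget injected at three seconds is the one that pushes the page past the 0.1 threshold for a good CLS.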

Tracking Scripts and Analytics Tags

Tracking layers are often treated as background additions, yet they still consume browser resources and compete with more important page tasks. Tag managers, analytics scripts, pixels, session recording tools, and marketing integrations can become a recurring source of INP and loading instability when they accumulate across the same templates.
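One way to see how tracking layers accumulate is to total the bytes each external host contributes to a template. The following is a minimal sketch, not a definitive audit tool: the `ResourceSample` shape, the `sumThirdPartyBytes` name, the host names, and the sizes are all assumptions for illustration.

```typescript
// Simplified stand-in for a resource timing record (assumed shape).
interface ResourceSample {
  url: string;
  transferSize: number; // bytes over the network
}

// Hypothetical helper: totals transferred bytes per third-party host.
// A host counts as first-party if it equals the site host or is a subdomain of it.
function sumThirdPartyBytes(
  resources: ResourceSample[],
  firstPartyHost: string
): Record<string, number> {
  const totals: Record<string, number> = {};
  for (const resource of resources) {
    const host = new URL(resource.url).hostname;
    const isFirstParty =
      host === firstPartyHost || host.endsWith("." + firstPartyHost);
    if (isFirstParty) continue;
    totals[host] = (totals[host] ?? 0) + resource.transferSize;
  }
  return totals;
}

// Invented sample for one product template.
const productPageResources: ResourceSample[] = [
  { url: "https://example.com/theme.js", transferSize: 40000 },
  { url: "https://cdn.example.com/hero.jpg", transferSize: 120000 },
  { url: "https://tags.example.net/manager.js", transferSize: 90000 },
  { url: "https://tags.example.net/pixel.js", transferSize: 70000 },
  { url: "https://chat.example.org/widget.js", transferSize: 60000 },
];
console.log(sumThirdPartyBytes(productPageResources, "example.com"));
```

In a browser, similar records could be collected from `performance.getEntriesByType("resource")`, although cross-origin entries often report a `transferSize` of 0 unless the vendor sends a `Timing-Allow-Origin` header, so byte totals for external hosts may be understated.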

Sliders and Embedded App Features

Media-heavy components deserve special attention on homepages, campaign pages, and product templates. Poorly configured sliders, embedded app blocks, and dynamic storefront features can delay hero rendering, interfere with image loading, and add extra JavaScript work before important content becomes visible. That is why image optimization for Core Web Vitals matters even more when visuals are controlled through external features, and why inefficient third-party JavaScript loading often turns a useful feature into a rendering bottleneck.

Table 1. Third-Party Elements and Their Core Web Vitals Risk

| Third-Party Element | CWV Risk | Typical Symptom | Business Impact |
|---|---|---|---|
| Review widget | CLS / INP | Shifted content or delayed interaction | Lower trust and weaker conversion confidence |
| Popup or consent layer | CLS / INP | Visible jumps or blocked actions | Interrupted journeys and more exits |
| Chat tool | INP | Laggy clicks and slower response | Less stable engagement |
| Analytics tags and pixels | LCP / INP | Slower rendering and heavier script execution | Less predictable UX |
| Slider or carousel | LCP / CLS | Late or unstable hero rendering | Lower engagement with key content |

Why CMS Sites Are More Prone to Core Web Vitals Regressions

CMS websites are more exposed to Core Web Vitals regressions because their performance is shaped by a modular system rather than by one tightly controlled codebase. Themes, plugins, apps, tracking layers, storefront modules, and custom additions often share the same templates, which means new functionality can affect page behavior far beyond the feature it was meant to add.

Plugin-Heavy CMS Architecture and Layered Code

This risk comes from architecture as much as from code volume. In many CMS builds, each added extension brings its own scripts, styles, requests, and rendering rules, yet those additions still compete inside the same page. That makes CMS performance more sensitive to loading order, template complexity, and shared dependencies than teams often expect.

WordPress, Shopify, Magento, OpenCart, and PrestaShop Risks

The pattern appears across platforms, even if the implementation differs. WordPress sites often accumulate plugins across content and marketing needs. Shopify storefronts can become app-heavy on product and collection templates. Magento, OpenCart, and PrestaShop builds face similar pressure when extension-driven functionality grows faster than performance governance. The result is a platform model in which regressions are easier to introduce because flexibility is built into the way features are added.

Lighthouse vs. CrUX and RUM After Release

Performance data can look inconsistent after release because different sources measure different things. On CMS sites with heavy third-party functionality, that difference matters: a page may appear acceptable in a controlled test while field data and real-user sessions tell a less stable story.

Lab Data vs. Field Data in Core Web Vitals

Teams need to distinguish between lab and field data. As explained in this overview of lab and field data differences, Lighthouse measures performance in a controlled environment and helps surface likely technical bottlenecks under fixed conditions. CrUX reflects aggregated Chrome user experience in the field, while RUM shows what happens across actual visits on a specific site.

Why These Sources Show Different Pictures

The gap exists because each source captures a different layer of reality. Lighthouse is useful for controlled debugging, CrUX shows broader field trends across Chrome users that feed into page experience in search, and RUM reveals live template behavior on your own site. Taken together, they help teams avoid over-trusting one “good” score when third-party functionality behaves differently under real usage.
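The difference between a single lab run and field data can be sketched with a small calculation. Core Web Vitals are assessed at the 75th percentile of real page loads, so the example below compares one lab value with the 75th percentile of a set of field samples. The function names and the sample numbers are invented for illustration, and the nearest-rank percentile method is one simple choice among several.

```typescript
// Nearest-rank percentile: the smallest sample that has at least
// p percent of all samples at or below it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) {
    throw new Error("percentile needs at least one sample");
  }
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

interface LabFieldGap {
  labValue: number;
  fieldP75: number;
  gap: number; // positive when real users see worse values than the lab run
}

// Hypothetical helper: contrasts one lab measurement with field samples.
function compareLabToField(labValue: number, fieldSamples: number[]): LabFieldGap {
  const fieldP75 = percentile(fieldSamples, 75);
  return { labValue, fieldP75, gap: fieldP75 - labValue };
}

// Invented LCP samples in milliseconds from real-user sessions.
const fieldLcp = [1800, 2100, 2300, 2600, 2900, 3400, 4100, 5200];
console.log(compareLabToField(2000, fieldLcp));
```

With these invented numbers the lab run looks comfortably good, while the field 75th percentile lands in the needs-improvement range. That is the kind of gap slower devices, slower networks, and late third-party scripts can produce.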

Table 2. Lighthouse vs. CrUX vs. RUM

| Source | What It Shows | Where It Helps | What It Can Miss |
|---|---|---|---|
| Lighthouse | Controlled lab performance | Early debugging and release checks | Real-user variability and delayed script behavior |
| CrUX | Aggregated field data from Chrome users | Page experience trends visible in search | Page-level details and site-specific diagnosis |
| RUM | Real-user sessions on your site | Post-release monitoring and template-level diagnosis | Broader public benchmark context |

Core Web Vitals Degradation Signals in SEO and UX

Third-party regressions do not stay inside performance dashboards. They surface as visible friction in page experience, weaker engagement quality, and less stable search performance signals.

Conversion Drops and Unstable Page Experience

On CMS and eCommerce pages, even small instability can disrupt user decisions. A late-loading popup, review block, consent layer, or promotional element can shift visible content, interrupt attention, or delay the moment when the page feels ready to use.

Performance Issues After Plugin Updates

A common symptom is that key templates feel worse even when no obvious feature is broken. Product pages, landing pages, and campaign pages may load less smoothly, respond more slowly, or feel less visually stable than before.

Why Slow Scripts Are Hard to Isolate

These symptoms are often difficult to trace back to one source. Teams may notice weaker responsiveness, unstable layouts, or unexplained conversion friction long before they can confidently identify which external dependency introduced the problem.
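One generic way to narrow down a suspect is bisection: disable half of the external scripts on a staging copy, re-test, and repeat on whichever half still shows the problem. The sketch below is illustrative only. It assumes exactly one culprit, a repeatable check, and a hypothetical `isRegressed` callback that stands in for whatever measurement a team actually runs.

```typescript
// Hypothetical helper: finds the one script whose presence triggers the regression.
// Assumes a single culprit and a deterministic check; returns null if the
// regression does not reproduce with every script enabled.
function bisectCulprit(
  scripts: string[],
  isRegressed: (enabledScripts: string[]) => boolean
): string | null {
  if (scripts.length === 0 || !isRegressed(scripts)) return null;

  let candidates = scripts;
  while (candidates.length > 1) {
    const firstHalf = candidates.slice(0, Math.ceil(candidates.length / 2));
    // Keep whichever half still reproduces the regression.
    candidates = isRegressed(firstHalf)
      ? firstHalf
      : candidates.slice(firstHalf.length);
  }
  return candidates[0];
}

// Invented example: the check here is simulated, not a real measurement.
const installedScripts = ["reviews", "chat", "pixel", "heatmap", "slider"];
const simulatedCheck = (enabled: string[]) => enabled.includes("heatmap");
console.log(bisectCulprit(installedScripts, simulatedCheck));
```

Bisection needs far fewer test runs than disabling scripts one at a time, but it breaks down when a regression only appears through the interaction of two or more dependencies, which is common on plugin-heavy templates.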

Signs That Third-Party Functionality Is Becoming a Real Risk

- Field performance weakens while basic lab checks still look acceptable.

- Conversion-sensitive pages feel less stable after plugin, app, or tag changes.

- Product, landing, or campaign templates perform worse than simpler pages.

- User friction grows around popups, delayed interaction, or shifting UI.

Why Core Web Vitals Regressions Appear After Release

Core Web Vitals regressions often appear after release because production behavior is more complex than a pre-release snapshot. On CMS and eCommerce sites, performance problems may surface only after updated code meets real traffic, page templates, and existing dependencies in production.

Changes That Trigger Delayed Regressions

Delayed regressions are often caused by routine release changes: CMS or framework updates, new integrations, consent or personalization changes, campaign elements, and infrastructure changes such as caching or CDN rules. Each of these can change how resources load, execute, or compete once the release is live.

Why Monitoring Matters After Release

Post-release monitoring matters because it verifies whether the page stays stable under real usage, not just whether it passed a release check. On plugin-heavy CMS sites, it helps teams catch gradual degradation before it spreads across business-critical templates.
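A post-release check of this kind can be sketched as a comparison of field values before and after a release. The example below is a simplified sketch under stated assumptions: the `ReleaseCheck` shape, the `findRegressions` name, the 10% tolerance, and the sample values are all invented for illustration and would need tuning for a real site.

```typescript
// 75th-percentile field values for one template, before and after a release.
interface ReleaseCheck {
  metric: string;
  before: number;
  after: number;
}

// Hypothetical helper: lists metrics that worsened by more than the tolerance.
// The default tolerance of 0.1 (10%) is an assumed value, not a standard.
// Metrics with a "before" value of 0 are skipped because a relative change
// cannot be computed for them.
function findRegressions(checks: ReleaseCheck[], tolerance = 0.1): string[] {
  return checks
    .filter((c) => c.before > 0 && (c.after - c.before) / c.before > tolerance)
    .map((c) => c.metric);
}

// Invented values for a product template after a plugin update.
const productTemplate: ReleaseCheck[] = [
  { metric: "LCP", before: 2300, after: 2400 },
  { metric: "INP", before: 180, after: 260 },
  { metric: "CLS", before: 0.05, after: 0.12 },
];
console.log(findRegressions(productTemplate));
```

Running a comparison like this per template, rather than per site, helps show whether a regression is confined to product or campaign pages where third-party features are concentrated.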

Changes That Often Trigger Delayed Regressions

- CMS core or framework updates

- New or updated third-party integrations

- Consent, personalization, chat, or review changes

- Campaign, theme, or template changes

- CDN, caching, or infrastructure-level changes

When Plugin-Heavy Architecture Becomes Technical Debt

A plugin-heavy stack becomes technical debt when added functionality stops acting like a flexible advantage and starts limiting how safely the site can evolve. At that point, the issue is no longer just page speed but the growing cost of maintaining performance across releases, integrations, and business-critical templates.

When Third-Party Tools Weaken Site Performance

This shift usually shows up when each new app, plugin, or campaign element adds more uncertainty than value from a performance perspective. The stack may still deliver useful features, but it also becomes harder to update, harder to troubleshoot, and more likely to create regressions when multiple dependencies affect the same templates.

Why Custom Development Can Reduce Risk

Custom development helps when selected features have become too important to leave inside an overcrowded extension stack. Replacing some third-party functionality with lighter custom implementations can reduce dependency overlap, simplify release behavior, and make performance easier to govern over time.

Core Web Vitals as a Development Priority

Core Web Vitals are most useful when treated as an ongoing quality signal within the development process, not as a score to revisit only after problems become visible. On CMS and eCommerce websites, repeated change is normal, so performance needs to be evaluated as part of how the product is built and maintained.

Why Performance Control Belongs in Web Development

Stable Core Web Vitals depend on architectural choices, release discipline, and a process that checks how new features, integrations, and external dependencies affect page behavior over time. This is where broader performance optimization techniques matter most: not as one-time fixes, but as part of a development framework for protecting rendering stability, interaction quality, and predictable delivery.

For CMS and eCommerce teams, long-term Core Web Vitals stability depends less on occasional cleanup and more on making performance a standard part of planning, validation, and release control.

FAQ

Can third-party scripts hurt Core Web Vitals on a well-built CMS site?

Yes. Even a well-built CMS site can lose performance when third-party scripts add extra JavaScript, delay rendering, or shift visible content.

Why can Lighthouse look fine while real users still experience slow pages?

Because Lighthouse tests a controlled scenario. Real users introduce more devices, networks, conditions, and script behaviors than a lab check can show.

Which third-party elements most often cause CLS or INP issues?

The highest-risk ones are elements that inject interface changes late or add extra client-side work after the page starts rendering. In practice, that usually means interactive overlays, embedded engagement tools, and script-heavy marketing additions.

Why do Core Web Vitals regressions often appear after plugin or app updates?

Because updates can change script weight, loading order, dependencies, or content injection behavior even when the feature still appears to work.

When does plugin-heavy architecture become a real performance risk?

It becomes a risk when multiple plugins or apps shape how the same templates load, render, and respond.

Why is post-release monitoring important for CMS and eCommerce sites?

Because real traffic reveals issues that release checks may miss. It helps teams catch instability before it affects key templates, UX, and SEO signals.